qwen3.6-40b-claude-4.6-opus-deckard-heretic-uncensored-thinking-neo-code-di-imatrix-max

The Qwen 3.5 version (also 40B) got 181+ likes. This version uses the new Qwen 3.6 27B arch (which exceeds even Qwen's own 398B model).

WARNING: This model has character and intelligence. It will take no prisoners. It will give no quarter. Uncensored, unfiltered and boldly confident. Not even remotely "SFW" if you ask it for NSFW content. And it is wickedly smart too, exceeding the base model in 6 out of 7 benchmarks.

Qwen3.6-40B-Claude-4.6-Opus-Deckard-Heretic-Uncensored-Thinking

40 billion parameters (dense, not MoE), expanded from 27B Qwen 3.6, then trained on the Claude 4.6 Opus High Reasoning dataset via Unsloth on local hardware... but there is much more to the story - in comes DECKARD.

96 layers, 1275 Tensors. (50% more than base model of 27B)

Features variable-length reasoning: shorter for less complex prompts, longer for more complex ones.

Model performance has increased dramatically. And it has character too.

A lot of character.

No censorship, no nanny. (via Heretic)

And it is very, very smart.

...

Repository: localai | License: apache-2.0

qwopus3.6-35b-a3b-v1

# Qwen3.6-35B-A3B

> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

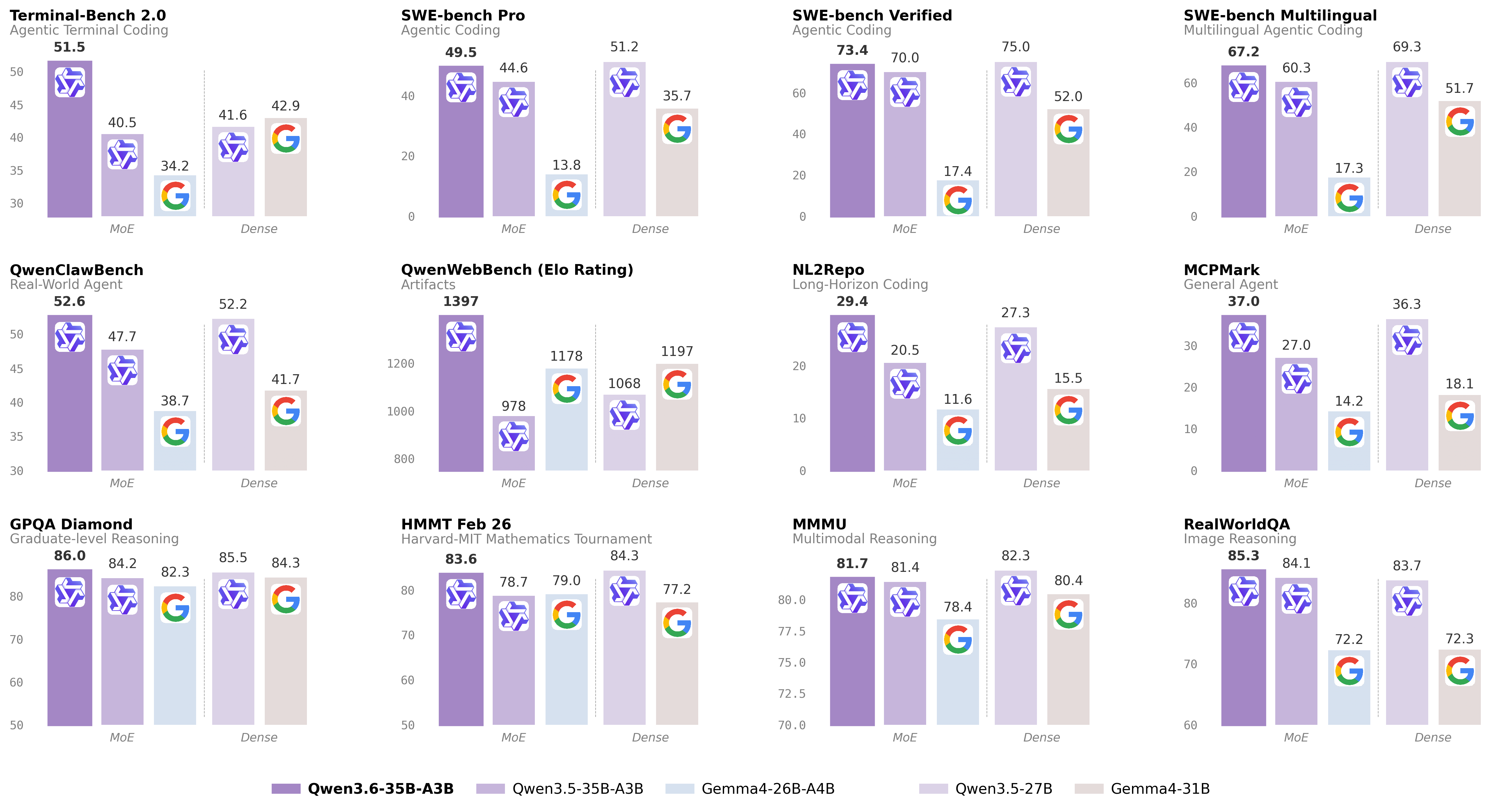

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.
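The idea behind thinking preservation can be sketched as a choice made when rebuilding the chat history: either strip earlier reasoning from assistant turns or keep it. This is a minimal illustration only. It assumes reasoning is wrapped in `<think>...</think>` tags, and both `build_history` and its `preserve_thinking` flag are hypothetical names, not the option exposed by the model's chat template.

```python
import re

# Assumption: reasoning is delimited by <think>...</think> in assistant turns.
THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", re.DOTALL)

def build_history(messages, preserve_thinking=False):
    """Return a copy of the chat history, optionally keeping past reasoning.

    Hypothetical helper: when preserve_thinking is False, reasoning blocks
    are removed from earlier assistant messages; when True, they are kept.
    """
    history = []
    for msg in messages:
        content = msg["content"]
        if msg["role"] == "assistant" and not preserve_thinking:
            content = THINK_BLOCK.sub("", content)
        history.append({"role": msg["role"], "content": content})
    return history

messages = [
    {"role": "user", "content": "Fix the bug."},
    {"role": "assistant",
     "content": "<think>The loop is off by one.</think>Changed range(n) to range(n + 1)."},
]

stripped = build_history(messages)
kept = build_history(messages, preserve_thinking=True)
```

With the flag off, the second message carries only the final answer; with it on, the reasoning text stays available to later turns.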

## Model Overview

...

Repository: localai | License: apache-2.0

qwen3.5-9b-deepseek-v4-flash

# Qwen3.5-9B

> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Over recent months, we have intensified our focus on developing foundation models that deliver exceptional utility and performance. Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

## Qwen3.5 Highlights

Qwen3.5 features the following enhancements:

- **Unified Vision-Language Foundation**: Early fusion training on multimodal tokens achieves cross-generational parity with Qwen3 and outperforms Qwen3-VL models across reasoning, coding, agents, and visual understanding benchmarks.

- **Efficient Hybrid Architecture**: Gated Delta Networks combined with sparse Mixture-of-Experts deliver high-throughput inference with minimal latency and cost overhead.

...

Repository: localai | License: apache-2.0

nemotron-3-nano-omni-30b-a3b-reasoning-apex

# Model Overview

### Description:

NVIDIA Nemotron 3 Nano Omni is a multimodal large language model that unifies video, audio, image, and text understanding to support enterprise-grade Q&A, summarization, transcription, and document intelligence workflows. It extends the Nemotron Nano family with integrated video+speech comprehension, Graphical User Interface (GUI), Optical Character Recognition (OCR), and speech transcription capabilities, enabling end-to-end processing of rich enterprise content such as meeting recordings, M&E assets, training videos, and complex business documents. NVIDIA Nemotron 3 Nano Omni was developed by NVIDIA as part of the Nemotron model family.

This model is available for commercial use.

This model was improved using Qwen3-VL-30B-A3B-Instruct, Qwen3.5-122B-A10B, Qwen3.5-397B-A17B, Qwen2.5-VL-72B-Instruct, and gpt-oss-120b. For more information, please see the Training Dataset section below.

### License/Terms of Use

Governing Terms: Use of this model is governed by the NVIDIA Open Model Agreement

### Deployment Geography:

Global

...

Repository: localai | License: other

carnice-v2-27b

# Carnice-V2-27B for Hermes Agent

Carnice-V2-27B is a full merged BF16 SFT of `Qwen/Qwen3.6-27B` for Hermes-style agent traces. This repository contains the standalone merged model weights, not only a LoRA adapter.

## BF16 Transformers Loading Fix

The BF16 safetensors were republished with corrected `Qwen3_5ForConditionalGeneration` tensor prefixes. The original merge artifact accidentally serialized an extra Unsloth wrapper prefix, which caused direct HF Transformers loads to report the real weights as unexpected keys and initialize expected layers randomly. GGUF files were not affected because the GGUF conversion path normalized those prefixes.
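The kind of key normalization described above can be sketched as follows. This is an illustration under assumptions: the model card does not state the actual wrapper prefix, so `"wrapper."` and the key names below are hypothetical, and `strip_wrapper_prefix` is not a function from Transformers or Unsloth.

```python
def strip_wrapper_prefix(state_dict, prefix):
    """Return a copy of state_dict with `prefix` removed from matching keys."""
    fixed = {}
    for key, tensor in state_dict.items():
        new_key = key[len(prefix):] if key.startswith(prefix) else key
        if new_key in fixed:
            # Two source keys must not collapse onto the same target key.
            raise ValueError(f"duplicate key after stripping: {new_key}")
        fixed[new_key] = tensor
    return fixed

# Hypothetical keys; integers stand in for tensors.
broken = {
    "wrapper.model.layers.0.weight": 1,
    "wrapper.model.layers.1.weight": 2,
    "lm_head.weight": 3,
}
fixed = strip_wrapper_prefix(broken, "wrapper.")
```

A loader that expects `model.layers.0.weight` would treat the prefixed keys as unexpected and leave the expected layers at their random initialization, which is the failure mode the fix addresses.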

## Benchmarks

The benchmark artifact bundle is included under `benchmarks/`. It contains the rendered graph, extracted `metrics.json`, benchmark scripts, and raw result files used to make the chart.

Scope note: the IFEval run is a short `limit=20` A/B smoke benchmark, not an official full leaderboard score. Held-out loss/perplexity is the exact assistant-only training-format validation metric from the SFT script. The raw BFCL two-case smoke files are included for auditability, but they are too small to use as a model-quality claim.
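For readers unfamiliar with the held-out metric, perplexity is the exponential of the mean per-token loss, and "assistant-only" means that only assistant tokens contribute to the mean. A minimal sketch with made-up numbers; `assistant_only_perplexity` is a hypothetical helper, not the code from the SFT script.

```python
import math

def assistant_only_perplexity(token_losses, assistant_mask):
    """exp(mean loss) over tokens where assistant_mask is truthy."""
    kept = [loss for loss, keep in zip(token_losses, assistant_mask) if keep]
    if not kept:
        raise ValueError("no assistant tokens to score")
    return math.exp(sum(kept) / len(kept))

# Illustrative per-token cross-entropy losses (nats), not real results.
losses = [2.0, 1.0, 3.0, 0.5]
mask = [0, 1, 1, 0]  # only the two middle tokens belong to the assistant
ppl = assistant_only_perplexity(losses, mask)  # exp((1.0 + 3.0) / 2)
```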

...

Repository: localai | License: apache-2.0

qwopus3.6-27b-v1-preview

# Qwen3.6-27B

> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai | License: apache-2.0

qwen3.6-27b

# Qwen3.6-27B

> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai | License: apache-2.0

supergemma4-26b-uncensored-v2

License: Apache 2.0 | Authors: Google DeepMind

Gemma is a family of open models built by Google DeepMind. Gemma 4 models are multimodal, handling text and image input (with audio supported on small models) and generating text output. This release includes open-weights models in both pre-trained and instruction-tuned variants. Gemma 4 features a context window of up to 256K tokens and maintains multilingual support in over 140 languages.

Featuring both Dense and Mixture-of-Experts (MoE) architectures, Gemma 4 is well-suited for tasks like text generation, coding, and reasoning. The models are available in four distinct sizes: **E2B**, **E4B**, **26B A4B**, and **31B**. Their diverse sizes make them deployable in environments ranging from high-end phones to laptops and servers, democratizing access to state-of-the-art AI.

Gemma 4 introduces key **capability and architectural advancements**:

* **Reasoning** – All models in the family are designed as highly capable reasoners, with configurable thinking modes.

...

Repository: localai | License: gemma

qwen3.6-35b-a3b-apex

# Qwen3.6-35B-A3B

> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai | License: apache-2.0

qwen3.6-35b-a3b

# Qwen3.6-35B-A3B

> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai | License: apache-2.0

qwen3-vl-embedding-8b

**Model Name:** Qwen3-VL-Embedding-8B

**Base Model:** Qwen/Qwen3-VL-8B-Instruct

**Description:**

The **Qwen3-VL-Embedding** and **Qwen3-VL-Reranker** model series are the latest additions to the Qwen family, built upon the recently open-sourced and powerful Qwen3-VL foundation model. Specifically designed for multimodal information retrieval and cross-modal understanding, this suite accepts diverse inputs including text, images, screenshots, and videos, as well as inputs containing a mixture of these modalities.

**Key Features:**

- Model Type: MultiModal Embedding

- Supported Languages: 30+ Languages

- Supported Input Modalities: Text, images, screenshots, videos, and arbitrary multimodal combinations (e.g., text + image, text + video)

- Number of Parameters: 8B

- Context Length: 32k

- Embedding Dimension: Up to 4096, supports user-defined output dimensions ranging from 64 to 4096
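A user-defined output dimension is commonly obtained by keeping a prefix of the full vector and re-normalizing it. The sketch below assumes that prefix-truncation behavior (Matryoshka-style), which this card does not spell out, and uses a toy 3-dimensional vector instead of a real 64 to 4096 dimensional one. `truncate_embedding` is a hypothetical helper.

```python
import math

def truncate_embedding(vec, dim):
    """Keep the first `dim` components and rescale to unit L2 norm."""
    if not 1 <= dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in head]

full = [3.0, 4.0, 12.0]            # toy stand-in for a model embedding
small = truncate_embedding(full, 2)  # [3, 4] has norm 5, so this is [0.6, 0.8]
```

Re-normalizing matters when the downstream index uses cosine or dot-product similarity, since the truncated prefix no longer has unit length on its own.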

**Downloads:**

- [GGUF Files](https://huggingface.co/Qwen/Qwen3-VL-Embedding-8B) (e.g., `Qwen3-VL-Embedding-8B-Q8_0.gguf`).

**Usage:**

- Requires `transformers`, `qwen-vl-utils`, and `torch`.

- Example: `from scripts.qwen3_vl_embedding import Qwen3VLEmbedder; model = Qwen3VLEmbedder(...)`

**Citation:**

@article{qwen3vlembedding, ...}

Repository: localai | License: apache-2.0

qwen3-vl-embedding-2b

**Model Name:** Qwen3-VL-Embedding-2B

**Base Model:** Qwen/Qwen3-VL-2B-Instruct

**Description:**

The **Qwen3-VL-Embedding** and **Qwen3-VL-Reranker** model series are the latest additions to the Qwen family, built upon the recently open-sourced and powerful Qwen3-VL foundation model. Specifically designed for multimodal information retrieval and cross-modal understanding, this suite accepts diverse inputs including text, images, screenshots, and videos, as well as inputs containing a mixture of these modalities.

**Key Features:**

- Model Type: MultiModal Embedding

- Supported Languages: 30+ Languages

- Supported Input Modalities: Text, images, screenshots, videos, and arbitrary multimodal combinations (e.g., text + image, text + video)

- Number of Parameters: 2B

- Context Length: 32k

- Embedding Dimension: Up to 2048, supports user-defined output dimensions ranging from 64 to 2048

**Downloads:**

- [GGUF Files](https://huggingface.co/Qwen/Qwen3-VL-Embedding-2B) (e.g., `Qwen3-VL-Embedding-2B-Q8_0.gguf`).

**Usage:**

- Requires `transformers`, `qwen-vl-utils`, and `torch`.

- Example: `from scripts.qwen3_vl_embedding import Qwen3VLEmbedder; model = Qwen3VLEmbedder(...)`

**Citation:**

@article{qwen3vlembedding, ...}

Repository: localai | License: apache-2.0

qwen3-vl-reranker-8b

**Model Name:** Qwen3-VL-Reranker-8B

**Base Model:** Qwen/Qwen3-VL-Reranker-8B

**Description:**

A high-performance multimodal reranking model for state-of-the-art cross-modal search. It supports 30+ languages and handles text, images, screenshots, videos, and mixed modalities. With 8B parameters and a 32K context length, it refines retrieval results by combining embedding vectors with precise relevance scores. Optimized for efficiency, it supports quantized versions (e.g., Q8_0, Q4_K_M) and is ideal for applications requiring accurate multimodal content matching.

**Key Features:**

- **Multimodal**: Text, images, videos, and mixed content.

- **Language Support**: 30+ languages.

- **Quantization**: Available in Q8_0 (best quality), Q4_K_M (fast, recommended), and lower-precision options.

- **Performance**: Outperforms base models in retrieval tasks (e.g., JinaVDR, ViDoRe v3).

- **Use Case**: Enhances search pipelines by refining embeddings with precise relevance scores.
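The second-stage role of a reranker in a search pipeline can be sketched as scoring each retrieved candidate against the query and sorting by that score. In this illustration `toy_score` is a word-overlap stand-in, not the model: in a real pipeline the score would come from the reranker itself. Both function names are hypothetical.

```python
def rerank(query, candidates, score_fn, top_k=None):
    """Score each candidate against the query and return (score, doc) pairs,
    highest score first."""
    scored = [(score_fn(query, doc), doc) for doc in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored if top_k is None else scored[:top_k]

def toy_score(query, doc):
    """Stand-in relevance score: fraction of query words found in the doc."""
    q_words = set(query.lower().split())
    d_words = set(doc.lower().split())
    return len(q_words & d_words) / len(q_words) if q_words else 0.0

# Candidates as they might come back from a first-stage embedding search.
candidates = ["a blue bike", "a red car on a road", "red apple"]
ranked = rerank("red car", candidates, toy_score, top_k=2)
```

Keeping the scoring function as a parameter makes it easy to swap the toy scorer for a model-backed one without touching the sorting logic.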

**Downloads:**

- [GGUF Files](https://huggingface.co/mradermacher/Qwen3-VL-Reranker-8B-GGUF) (e.g., `Qwen3-VL-Reranker-8B.Q8_0.gguf`).

**Usage:**

- Requires `transformers`, `qwen-vl-utils`, and `torch`.

- Example: `from scripts.qwen3_vl_reranker import Qwen3VLReranker; model = Qwen3VLReranker(...)`

**Citation:**

@article{qwen3vlembedding, ...}

Repository: localai | License: apache-2.0

qwen3-vl-reranker-2b-i1

**Model Name:** Qwen3-VL-Reranker-2B-i1

**Base Model:** Qwen/Qwen3-VL-Reranker-2B

**Description:**

A high-performance multimodal reranking model for state-of-the-art cross-modal search. It supports 30+ languages and handles text, images, screenshots, videos, and mixed modalities. With 2B parameters and a 32K context length, it refines retrieval results by combining embedding vectors with precise relevance scores. Optimized for efficiency, it supports quantized versions (e.g., Q8_0, Q4_K_M) and is ideal for applications requiring accurate multimodal content matching.

**Key Features:**

- **Multimodal**: Text, images, videos, and mixed content.

- **Language Support**: 30+ languages.

- **Quantization**: Available in Q8_0 (best quality), Q4_K_M (fast, recommended), and lower-precision options.

- **Performance**: Outperforms base models in retrieval tasks (e.g., JinaVDR, ViDoRe v3).

- **Use Case**: Enhances search pipelines by refining embeddings with precise relevance scores.

**Downloads:**

- [GGUF Files](https://huggingface.co/mradermacher/Qwen3-VL-Reranker-2B-i1-GGUF) (e.g., `Qwen3-VL-Reranker-2B.i1-Q4_K_M.gguf`).

**Usage:**

- Requires `transformers`, `qwen-vl-utils`, and `torch`.

- Example: `from scripts.qwen3_vl_reranker import Qwen3VLReranker; model = Qwen3VLReranker(...)`

**Citation:**

@article{qwen3vlembedding, ...}

Repository: localai | License: apache-2.0

rwkv7-g1c-13.3b

The model is **RWKV7 g1c 13B**, a large language model optimized for efficiency. It is quantized using **Bartowski's calibrationv5 for imatrix** to reduce memory usage while maintaining performance. The base model is **BlinkDL/rwkv7-g1**, and this version is tailored for text-generation tasks. It balances accuracy and efficiency, making it suitable for deployment in various applications.

Repository: localai | License: apache-2.0

iquest-coder-v1-40b-instruct-i1

The **IQuest-Coder-V1-40B-Instruct-i1-GGUF** is a quantized version of the original **IQuestLab/IQuest-Coder-V1-40B-Instruct** model, designed for efficient deployment. It is an **instruction-following large language model** with 40 billion parameters, optimized for tasks like code generation and reasoning.

**Key Features:**

- **Size:** 40B parameters (quantized for efficiency).

- **Purpose:** Instruction-based coding and reasoning.

- **Format:** GGUF (supports multi-part files).

- **Quantization:** Uses advanced techniques (e.g., IQ3_M, Q4_K_M) for balance between performance and quality.

**Available Quantizations:**

- Optimized for speed and size: **i1-Q4_K_M** (recommended).

- Lower-quality options for trade-off between size/quality.

**Note:** This is a **quantized version** of the original model, but the base model (IQuestLab/IQuest-Coder-V1-40B-Instruct) is the official source. For full functionality, use the unquantized version or verify compatibility with your deployment tools.

Repository: localai | License: iquestcoder

allenai_olmo-3.1-32b-think

This entry provides quantized builds of **allenai/Olmo-3.1-32B-Think**, a large language model (LLM), for efficient inference. The quantizations were produced by **bartowski** using the **imatrix** quantization method.

### Key Features:

- **Base Model**: `allenai/Olmo-3.1-32B-Think` (unquantized version).

- **Quantized Versions**: Available in multiple formats with varying precision (e.g., `Q8_0`, `Q6_K_L`, `Q5_K_M`, `Q4_1`), alongside a `bf16` file. These are derived from the original model using the **imatrix calibration dataset**.

- **Performance**: Optimized for low-memory usage and efficient inference on GPUs/CPUs. Recommended quantization types include `Q6_K_L` (near-perfect quality) or `Q4_K_M` (default, balanced performance).

- **Downloads**: Available via Hugging Face CLI. Split into multiple files if needed for large models.

- **License**: Apache-2.0.

### Recommended Quantization:

- Use `Q6_K_L` for highest quality (near-perfect performance).

- Use `Q4_K_M` for balanced performance and size.

- Avoid lower-quality options (e.g., `Q3_K_S`) unless specific hardware constraints apply.

This model is ideal for deploying on GPUs/CPUs with limited memory, leveraging efficient quantization for practical use cases.
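To make the size trade-off between quantization levels concrete, a rough estimate is parameter count times bits per weight. This is a minimal sketch under stated assumptions: `estimate_size_gb` is a hypothetical helper, the 32e9 parameter count is read off the model name rather than the actual tensor count, and 8.5 bits per weight is the storage cost of `Q8_0` blocks in llama.cpp (32 8-bit weights plus one 16-bit scale). Real files differ somewhat because of metadata and tensors kept at other precisions.

```python
def estimate_size_gb(num_params, bits_per_weight):
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    if num_params <= 0 or bits_per_weight <= 0:
        raise ValueError("num_params and bits_per_weight must be positive")
    return num_params * bits_per_weight / 8 / 1e9

# Assumption: nominal 32B parameters, taken from the model name.
NOMINAL_PARAMS = 32e9

# Q8_0 stores 34 bytes per block of 32 weights, i.e. 8.5 bits per weight.
q8_0_gb = estimate_size_gb(NOMINAL_PARAMS, 8.5)
```

Passing a lower bits-per-weight value for another quantization type gives a proportionally smaller estimate, which is the basis for choosing a type that fits the available memory.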

Repository: localai | License: apache-2.0

opengvlab_internvl3_5-30b-a3b

We introduce InternVL3.5, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the Cascade Reinforcement Learning (Cascade RL) framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a Visual Resolution Router (ViR) that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our Decoupled Vision-Language Deployment (DvD) strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, i.e., InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, i.e., InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.

Repository: localai | License: apache-2.0