qwopus3.6-35b-a3b-v1

# Qwen3.6-35B-A3B

[Qwen Chat](https://chat.qwen.ai)

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

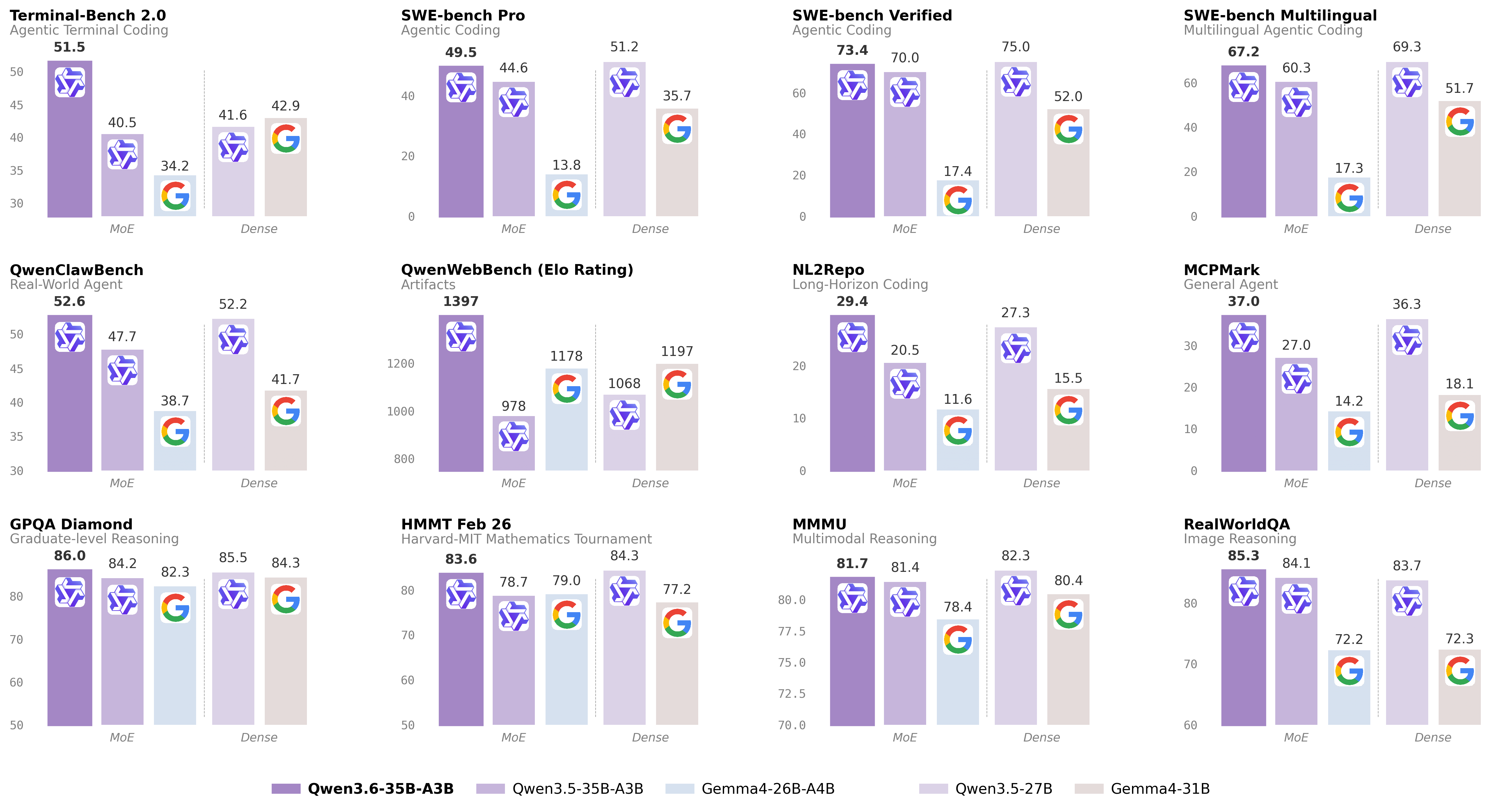

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.5-9b-deepseek-v4-flash

# Qwen3.5-9B

[Qwen Chat](https://chat.qwen.ai)

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Over recent months, we have intensified our focus on developing foundation models that deliver exceptional utility and performance. Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

## Qwen3.5 Highlights

Qwen3.5 features the following enhancements:

- **Unified Vision-Language Foundation**: Early fusion training on multimodal tokens achieves cross-generational parity with Qwen3 and outperforms Qwen3-VL models across reasoning, coding, agents, and visual understanding benchmarks.

- **Efficient Hybrid Architecture**: Gated Delta Networks combined with sparse Mixture-of-Experts deliver high-throughput inference with minimal latency and cost overhead.

...

Repository: localai

License: apache-2.0

nemotron-3-nano-omni-30b-a3b-reasoning-apex

# Model Overview

### Description:

NVIDIA Nemotron 3 Nano Omni is a multimodal large language model that unifies video, audio, image, and text understanding to support enterprise-grade Q&A, summarization, transcription, and document intelligence workflows. It extends the Nemotron Nano family with integrated video+speech comprehension, Graphical User Interface (GUI), Optical Character Recognition (OCR), and speech transcription capabilities, enabling end-to-end processing of rich enterprise content such as meeting recordings, M&E assets, training videos, and complex business documents. NVIDIA Nemotron 3 Nano Omni was developed by NVIDIA as part of the Nemotron model family.

This model is available for commercial use.

This model was improved using Qwen3-VL-30B-A3B-Instruct, Qwen3.5-122B-A10B, Qwen3.5-397B-A17B, Qwen2.5-VL-72B-Instruct, and gpt-oss-120b. For more information, please see the Training Dataset section below.

### License/Terms of Use

Governing Terms: Use of this model is governed by the NVIDIA Open Model Agreement

### Deployment Geography:

Global

...

Repository: localai

License: other

qwopus3.6-27b-v1-preview

# Qwen3.6-27B

[Qwen Chat](https://chat.qwen.ai)

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-27b

# Qwen3.6-27B

[Qwen Chat](https://chat.qwen.ai)

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-35b-a3b-apex

# Qwen3.6-35B-A3B

[Qwen Chat](https://chat.qwen.ai)

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-35b-a3b

# Qwen3.6-35B-A3B

[Qwen Chat](https://chat.qwen.ai)

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai

License: apache-2.0

gemma-4-e2b-it

Google Gemma 4 E2B-IT is a lightweight open-source multimodal model with 5B total parameters and 2B effective parameters using selective parameter activation. It handles text and image input, generating text output, with a 256K context window and support for 140+ languages. Optimized for efficient execution on low-resource devices including mobile and laptops.

Repository: localai

License: apache-2.0

z-image-diffusers

Z-Image is the foundation model of the ⚡️-Image family, engineered for good quality, robust generative diversity, broad stylistic coverage, and precise prompt adherence. While Z-Image-Turbo is built for speed, Z-Image is a full-capacity, undistilled transformer designed to be the backbone for creators, researchers, and developers who require the highest level of creative freedom.

Repository: localai

License: apache-2.0

z-image-turbo-diffusers

🚀 Z-Image-Turbo – A distilled version of Z-Image that matches or exceeds leading competitors with only 8 NFEs (Number of Function Evaluations). It offers ⚡️sub-second inference latency⚡️ on enterprise-grade H800 GPUs and fits comfortably within 16G VRAM consumer devices. It excels in photorealistic image generation, bilingual text rendering (English & Chinese), and robust instruction adherence.

Repository: localai

License: apache-2.0

huihui-glm-4.7-flash-abliterated-i1

The model is a quantized version of **huihui-ai/Huihui-GLM-4.7-Flash-abliterated**, optimized for efficiency and deployment. It uses GGUF files with various quantization levels (e.g., IQ1_M, IQ2_XXS, Q4_K_M) and is designed for tasks requiring low-resource deployment. Key features include:

- **Base Model**: Huihui-GLM-4.7-Flash-abliterated (the unquantized source model).

- **Quantization**: Supports IQ1_M to Q4_K_M, balancing accuracy and efficiency.

- **Use Cases**: Suitable for applications needing lightweight inference, such as edge devices or resource-constrained environments.

- **Downloads**: Available in GGUF format with varying quality and size (e.g., 0.2GB to 18.2GB).

- **Tags**: Abliterated, uncensored, and optimized for specific tasks.

This model is a modified (abliterated) version of the original GLM-4.7-Flash, packaged with quantized weights for deployment.

Repository: localai

License: mit

tildeopen-30b-instruct-lv-i1

The **TildeOpen-30B-Instruct-LV-i1-GGUF** is a quantized version of the base model **pazars/TildeOpen-30B-Instruct-LV**, optimized for deployment. It is an instruction-tuned language model trained on diverse datasets, supporting multiple languages (en, de, fr, pl, ru, it, pt, cs, nl, es, fi, tr, hu, bg, uk, bs, hr, da, et, lt, ro, sk, sl, sv, no, lv, sr, sq, mk, is, mt, ga). Licensed under CC-BY-4.0, it uses the Transformers library and is designed for efficient inference. The imatrix-quantized version is tailored for deployment on devices with limited resources, while the base model remains the original, unquantized version.

Repository: localai

License: cc-by-4.0

allenai_olmo-3.1-32b-think

**Olmo-3.1-32B-Think** is a large language model (LLM) packaged here in quantized form for efficient inference. The quantized files were produced by **bartowski** from the original **allenai/Olmo-3.1-32B-Think** model using **imatrix** quantization.

### Key Features:

- **Base Model**: `allenai/Olmo-3.1-32B-Think` (unquantized version).

- **Quantized Versions**: Available in multiple quantization types with varying precision (e.g., `Q8_0`, `Q6_K_L`, `Q5_K_M`, `Q4_1`), alongside a `bf16` file. These are derived from the original model using the **imatrix calibration dataset**.

- **Performance**: Optimized for low-memory usage and efficient inference on GPUs/CPUs. Recommended quantization types include `Q6_K_L` (near-perfect quality) or `Q4_K_M` (default, balanced performance).

- **Downloads**: Available via Hugging Face CLI. Split into multiple files if needed for large models.

- **License**: Apache-2.0.

### Recommended Quantization:

- Use `Q6_K_L` for highest quality (near-perfect performance).

- Use `Q4_K_M` for balanced performance and size.

- Avoid lower-quality options (e.g., `Q3_K_S`) unless specific hardware constraints apply.

This model is ideal for deploying on GPUs/CPUs with limited memory, leveraging efficient quantization for practical use cases.
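The recommendations above amount to a simple selection rule, which can be sketched in a few lines of Python. This is only an illustration: the helper name `pick_quant`, the memory-budget parameter, and the fallback rule for non-recommended types are assumptions, not part of the model card, and the caller has to supply the actual file sizes.

```python
from typing import Mapping, Optional

# Preference order taken from the recommendations above: highest quality first.
PREFERRED = ("Q6_K_L", "Q4_K_M")


def pick_quant(sizes_gb: Mapping[str, float], budget_gb: float) -> Optional[str]:
    """Return the quantization type to use for a given memory budget.

    sizes_gb maps a quantization name to its file size in GB. The caller
    supplies these numbers (for example, read off the download page).
    This helper is hypothetical and not part of any library.
    """
    # First try the recommended types, best quality first.
    for name in PREFERRED:
        if name in sizes_gb and sizes_gb[name] <= budget_gb:
            return name
    # Assumption: among the remaining types, a larger file loses less quality,
    # so fall back to the largest file that still fits.
    fitting = {name: size for name, size in sizes_gb.items() if size <= budget_gb}
    if not fitting:
        return None
    return max(fitting, key=fitting.get)
```

Calling `pick_quant` with a size table and a budget returns `Q6_K_L` when it fits, then `Q4_K_M`, and only drops to a lower-quality type such as `Q3_K_S` when neither recommended option fits.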

Repository: localai

License: apache-2.0

glm-4.5v-i1

This model is a **quantized version** of **GLM-4.5V**, a vision-language model originally developed by **zai-org**. This repository provides multiple quantized variants of the model, optimized for different trade-offs between size, speed, and quality. The base model, **GLM-4.5V**, is multilingual (Chinese/English), and this quantized version is designed for efficient inference on hardware with limited memory.

Key features include:

- **Quantization options**: IQ2_M, Q2_K, Q4_K_M, IQ3_M, IQ4_XS, etc., with sizes ranging from 43 GB to 96 GB.

- **Performance**: Optimized for inference, with some variants (e.g., Q4_K_M) balancing speed and quality.

- **Vision support**: The model is a vision model, with mmproj files available in the static repository.

- **License**: MIT-licensed.

This quantized version is ideal for applications requiring compact, efficient models while retaining most of the original capabilities of the base GLM-4.5V.

Repository: localai

License: mit

ai21labs_ai21-jamba-reasoning-3b

AI21’s Jamba Reasoning 3B is a top-performing reasoning model that packs leading scores on intelligence benchmarks and highly efficient processing into a compact 3B build.

The hybrid design combines Transformer attention with Mamba (a state-space model). Mamba layers are more efficient for sequence processing, while attention layers capture complex dependencies. This mix reduces memory overhead, improves throughput, and makes the model run smoothly on laptops, GPUs, and even mobile devices, while maintaining impressive quality.

Repository: localai

License: apache-2.0

ibm-granite_granite-4.0-h-small

Granite-4.0-H-Small is a 32B parameter long-context instruct model finetuned from Granite-4.0-H-Small-Base using a combination of open source instruction datasets with permissive license and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

Repository: localai

License: apache-2.0

ibm-granite_granite-4.0-h-tiny

Granite-4.0-H-Tiny is a 7B parameter long-context instruct model finetuned from Granite-4.0-H-Tiny-Base using a combination of open source instruction datasets with permissive license and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

Repository: localai

License: apache-2.0

ibm-granite_granite-4.0-h-micro

Granite-4.0-H-Micro is a 3B parameter long-context instruct model finetuned from Granite-4.0-H-Micro-Base using a combination of open source instruction datasets with permissive license and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

Repository: localai

License: apache-2.0

ibm-granite_granite-4.0-micro

Granite-4.0-Micro is a 3B parameter long-context instruct model finetuned from Granite-4.0-Micro-Base using a combination of open source instruction datasets with permissive license and internally collected synthetic datasets. This model is developed using a diverse set of techniques with a structured chat format, including supervised finetuning, model alignment using reinforcement learning, and model merging. Granite 4.0 instruct models feature improved instruction following (IF) and tool-calling capabilities, making them more effective in enterprise applications.

Repository: localai

License: apache-2.0
