qwen3.6-35b-a3b-claude-4.6-opus-reasoning-distilled

# 🔥 Qwen3.6-35B-A3B-Claude-4.6-Opus-Reasoning-Distilled

A reasoning SFT fine-tune of `Qwen/Qwen3.6-35B-A3B` on chain-of-thought (CoT) distillation data mostly sourced from Claude Opus 4.6. The goal is to preserve Qwen3.6's strong agentic coding and reasoning base while nudging the model toward structured Claude Opus-style reasoning traces and more stable long-form problem solving.

The training path is text-only. The Qwen3.6 base architecture includes a vision encoder, but this fine-tuning run did not train on image or video examples.

- **Developed by:** @hesamation

- **Base model:** `Qwen/Qwen3.6-35B-A3B`

- **License:** apache-2.0

This fine-tuning run is inspired by Jackrong/Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled, including the notebook/training workflow style and Claude Opus reasoning-distillation direction.

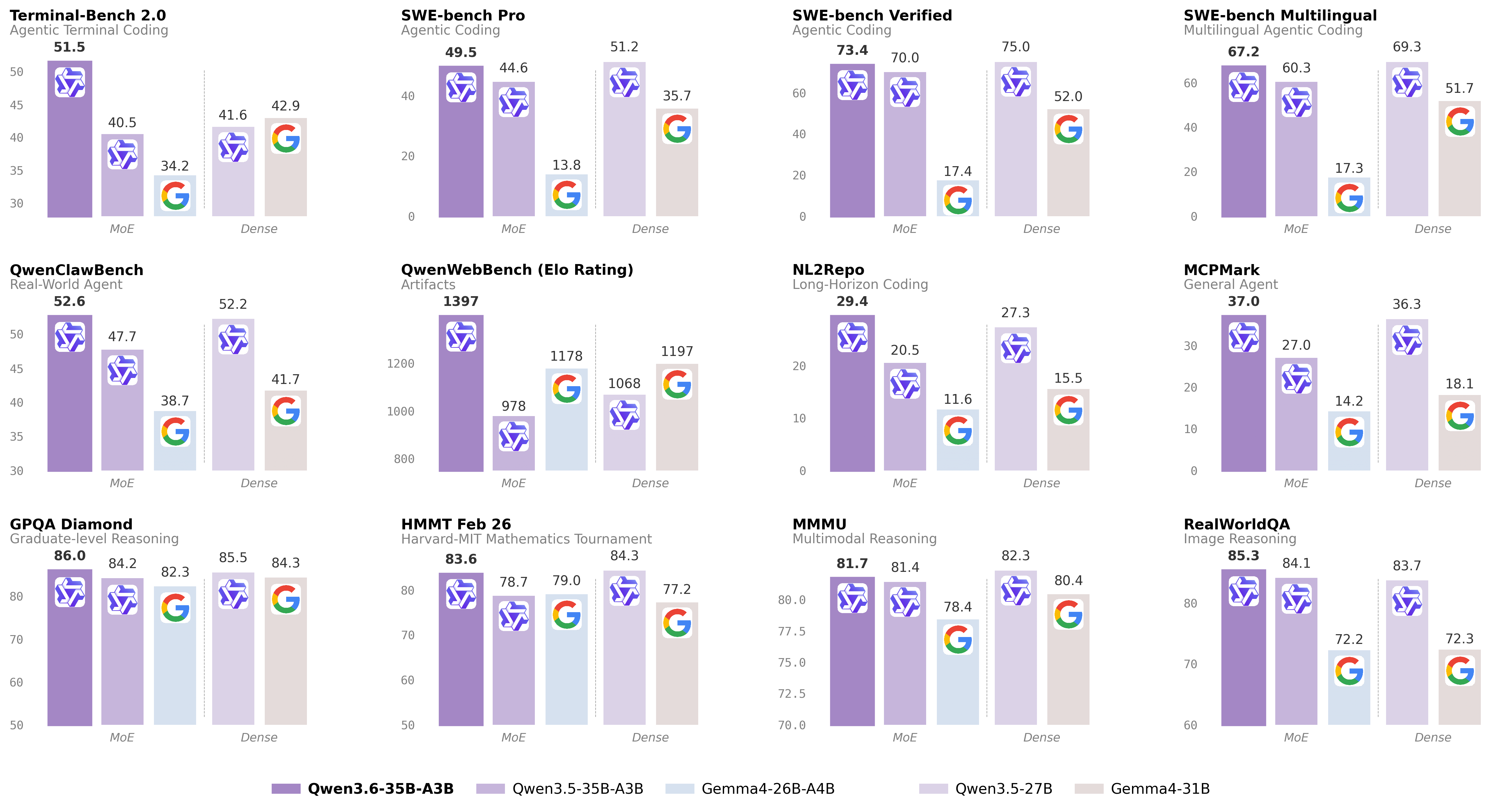

## Benchmark Results

The MMLU-Pro pass used 70 total questions per model: `--limit 5` across 14 MMLU-Pro subjects. Treat this as a smoke/comparative check, not a release-quality full benchmark.
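To make the "smoke check" caveat concrete, here is a minimal sketch of how wide the uncertainty is at this sample size. The accuracy value of 0.5 is a hypothetical worst case chosen for illustration, not a reported result, and the normal approximation is an assumption.

```python
import math


def binomial_margin(accuracy: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation 95% interval for an accuracy estimate."""
    return z * math.sqrt(accuracy * (1.0 - accuracy) / n)


subjects = 14            # MMLU-Pro subjects, from the text above
limit_per_subject = 5    # the `--limit 5` setting, from the text above
total_questions = subjects * limit_per_subject

# Hypothetical accuracy of 0.5 gives the widest possible interval.
margin = binomial_margin(0.5, total_questions)
print(f"{total_questions} questions, margin about +/-{margin * 100:.1f} points")
```

With 70 questions the interval is roughly 12 points either side, so small differences between models on this pass are within noise.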

...

Repository: localai

License: apache-2.0

nanbeige4.1-3b-q8

Nanbeige4.1-3B is built upon Nanbeige4-3B-Base and represents an enhanced iteration of our previous reasoning model, Nanbeige4-3B-Thinking-2511, achieved through further post-training optimization with supervised fine-tuning (SFT) and reinforcement learning (RL). As a highly competitive open-source model at a small parameter scale, Nanbeige4.1-3B illustrates that compact models can simultaneously achieve robust reasoning, preference alignment, and effective agentic behaviors.

Key features:

Strong Reasoning: Capable of solving complex, multi-step problems through sustained and coherent reasoning within a single forward pass, reliably producing correct answers on benchmarks like LiveCodeBench-Pro, IMO-Answer-Bench, and AIME 2026 I.

Robust Preference Alignment: Outperforms same-scale models (e.g., Qwen3-4B-2507, Nanbeige4-3B-2511) and larger models (e.g., Qwen3-30B-A3B, Qwen3-32B) on Arena-Hard-v2 and Multi-Challenge.

Agentic Capability: First general small model to natively support deep-search tasks and sustain complex problem-solving with >500 rounds of tool invocations; excels in benchmarks like xBench-DeepSearch (75), Browse-Comp (39), and others.

Repository: localai

License: apache-2.0

nanbeige4.1-3b-q4

Nanbeige4.1-3B is built upon Nanbeige4-3B-Base and represents an enhanced iteration of our previous reasoning model, Nanbeige4-3B-Thinking-2511, achieved through further post-training optimization with supervised fine-tuning (SFT) and reinforcement learning (RL). As a highly competitive open-source model at a small parameter scale, Nanbeige4.1-3B illustrates that compact models can simultaneously achieve robust reasoning, preference alignment, and effective agentic behaviors.

Key features:

Strong Reasoning: Capable of solving complex, multi-step problems through sustained and coherent reasoning within a single forward pass, reliably producing correct answers on benchmarks like LiveCodeBench-Pro, IMO-Answer-Bench, and AIME 2026 I.

Robust Preference Alignment: Outperforms same-scale models (e.g., Qwen3-4B-2507, Nanbeige4-3B-2511) and larger models (e.g., Qwen3-30B-A3B, Qwen3-32B) on Arena-Hard-v2 and Multi-Challenge.

Agentic Capability: First general small model to natively support deep-search tasks and sustain complex problem-solving with >500 rounds of tool invocations; excels in benchmarks like xBench-DeepSearch (75), Browse-Comp (39), and others.

Repository: localai

License: apache-2.0

speechbrain-ecapa-tdnn

Speaker (voice) recognition with SpeechBrain's ECAPA-TDNN trained on VoxCeleb. 192-d L2-normalised embeddings, ~1.9% Equal Error Rate on VoxCeleb1-O. Apache 2.0 licensed, commercial-safe.

The checkpoint is auto-downloaded from HuggingFace on first LoadModel (no separate weight file in gallery `files:`). It points at the upstream SpeechBrain HF repo directly, so every deployment gets the same bytes.
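Speaker embeddings of this kind are usually compared with cosine similarity. The sketch below shows that comparison on plain Python lists; the function names and the `threshold` value of 0.25 are hypothetical and would need tuning on real data, and the toy vectors stand in for embeddings the model would produce.

```python
import math
from typing import Sequence


def l2_normalise(vec: Sequence[float]) -> list[float]:
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalise a zero vector")
    return [x / norm for x in vec]


def cosine_score(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; for unit vectors this is just the dot product."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    ua, ub = l2_normalise(a), l2_normalise(b)
    return sum(x * y for x, y in zip(ua, ub))


def same_speaker(a: Sequence[float], b: Sequence[float], threshold: float = 0.25) -> bool:
    """Accept the pair when the score clears a (hypothetical) threshold."""
    return cosine_score(a, b) >= threshold


# Toy 192-d vectors standing in for real embeddings.
emb_a = [1.0] * 192
emb_b = [2.0] * 192                                        # same direction as emb_a
emb_c = [1.0 if i % 2 == 0 else -1.0 for i in range(192)]  # orthogonal to emb_a
print(cosine_score(emb_a, emb_b), cosine_score(emb_a, emb_c))
```

Because the embeddings are described as already L2-normalised, the normalisation step is redundant for real model output; it is kept so the sketch also works on raw vectors.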

Repository: localai

License: apache-2.0

edgetam

EdgeTAM is an ultra-efficient variant of the Segment Anything Model (SAM) for image segmentation.

It uses a RepViT backbone and is only ~16MB quantized (Q4_0), making it ideal for edge deployment.

Supports point-prompted and box-prompted image segmentation via the /v1/detection endpoint.

Powered by sam3.cpp (C/C++ with GGML).

Repository: localai

License: apache-2.0

fast-math-qwen3-14b

By applying SFT and GRPO on difficult math problems, we enhanced the performance of DeepSeek-R1-Distill-Qwen-14B and developed Fast-Math-R1-14B, which achieves approx. 30% faster inference on average, while maintaining accuracy.

In addition, we trained and open-sourced Fast-Math-Qwen3-14B, an efficiency-optimized version of Qwen3-14B, following the same approach.

Compared to Qwen3-14B, this model enables approx. 65% faster inference on average, with minimal loss in performance.

Technical details can be found in our github repository.

Note: This model likely inherits the ability to perform inference in TIR mode from the original model. However, all of our experiments were conducted in CoT mode, and its performance in TIR mode has not been evaluated.

Repository: localai

License: apache-2.0

vulpecula-4b

**Vulpecula-4B** is fine-tuned based on the traces of **SK1.1**, consisting of the same 1,000 entries of the **DeepSeek thinking trajectory**, along with fine-tuning on **Fine-Tome 100k** and **Open Math Reasoning** datasets. This specialized 4B parameter model is designed for enhanced mathematical reasoning, logical problem-solving, and structured content generation, optimized for precision and step-by-step explanation.

Repository: localai

License: apache-2.0

qwen3-55b-a3b-total-recall-deep-40x

WARNING: MADNESS - UNHINGED and... NSFW. Vivid prose. INTENSE. Visceral Details. Violence. HORROR. GORE. Swearing. UNCENSORED... humor, romance, fun.

Qwen3-55B-A3B-TOTAL-RECALL-Deep-40X-GGUF

A highly experimental model ("tamer" versions below) based on Qwen3-30B-A3B (MOE, 128 experts, 8 activated), with Brainstorm 40X (by DavidAU - details at bottom of this page).

These modifications blow the model (V1) out to 87 layers, 1046 tensors and 55B parameters.

Note that some versions are smaller than this, with fewer layers/tensors and smaller parameter counts.

The adapter extensively alters performance, reasoning and output generation.

Exceptional changes in creative, prose and general performance.

Regens of the same prompt - even with the same settings - will be very different.

THREE example generations below - creative (generated with Q3_K_M, V1 model).

ONE example generation (#4) - non creative (generated with Q3_K_M, V1 model).

You can run this model on CPU and/or GPU due to its unique construction and expert size: the base model activates 8 experts (about 3B parameters), which translates into roughly 6B activated parameters in this version.

Two quants uploaded for testing: Q3_K_M, Q4_K_M

V3, V4 and V5 are also available in these two quants.

V2 and V6 are available in Q3_K_M only, as are V1.3, V1.4, V1.5, V1.7 and V7 (newest).

NOTE: V2 and up are from source model 2; V1, V1.3, V1.4, V1.5 and V1.7 are from source model 1.

Repository: localai

License: apache-2.0

qwen3-42b-a3b-stranger-thoughts-deep20x-abliterated-uncensored-i1

WARNING: NSFW. Vivid prose. INTENSE. Visceral Details. Violence. HORROR. GORE. Swearing. UNCENSORED... humor, romance, fun.

Qwen3-42B-A3B-Stranger-Thoughts-Deep20x-Abliterated-Uncensored

This repo contains the full precision source code, in "safe tensors" format to generate GGUFs, GPTQ, EXL2, AWQ, HQQ and other formats. The source code can also be used directly.

ABOUT:

Qwen's excellent "Qwen3-30B-A3B", abliterated by "huihui-ai" and then combined with Brainstorm 20x (tech notes at bottom of the page) in a MOE (128 experts) at 42B parameters (up from 30B).

This pushes Qwen's abliterated/uncensored model to the absolute limit for creative use cases.

Prose (all), reasoning, thinking ... all will be very different from regular "Qwen 3s".

This model will generate horror, fiction, erotica, - you name it - in vivid, stark detail.

It will NOT hold back.

Likewise, regen(s) of the same prompt - even at the same settings - will create very different version(s) too.

See FOUR examples below.

The model retains the full reasoning and output generation of a Qwen3 MOE, but has not been tested for "non-creative" use cases.

Model is set with Qwen's default config:

40k context

8 of 128 experts activated.

Chatml OR Jinja Template (embedded)
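"8 of 128 experts activated" refers to top-k routing in a mixture-of-experts layer: a router scores every expert for each token and only the highest-scoring few are run. The following is a generic sketch of that idea, not this model's actual implementation; the function name and the random router scores are illustrative assumptions.

```python
import math
import random


def route_top_k(router_logits: list[float], k: int = 8) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalise their weights."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)          # subtract for stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return {i: e / total for i, e in zip(top, exps)}


random.seed(0)
# Hypothetical router scores for one token over 128 experts.
logits = [random.gauss(0.0, 1.0) for _ in range(128)]
weights = route_top_k(logits, k=8)
print(sorted(weights))  # indices of the 8 experts that would run for this token
```

Raising `k` (the "ACTIVE EXPERTS" setting mentioned in the usage guide) runs more experts per token, which costs more compute and changes the mixture the output is drawn from.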

IMPORTANT:

See usage guide / repo below to get the most out of this model, as settings are very specific.

USAGE GUIDE:

Please refer to this model card for

Specific usage, suggested settings, changing ACTIVE EXPERTS, templates, settings and the like:

How to maximize this model in "uncensored" form, with specific notes on "abliterated" models.

Rep pen / temp settings specific to getting the model to perform strongly.

https://huggingface.co/DavidAU/Qwen3-18B-A3B-Stranger-Thoughts-Abliterated-Uncensored-GGUF

GGUF / QUANTS / SPECIAL SHOUTOUT:

Special thanks to team Mradermacher for making the quants!

https://huggingface.co/mradermacher/Qwen3-42B-A3B-Stranger-Thoughts-Deep20x-Abliterated-Uncensored-GGUF

KNOWN ISSUES:

Model may "mis-capitalize" word(s) - lowercase, where uppercase should be - from time to time.

Model may add extra space from time to time before a word.

Incorrect template and/or settings will result in a drop in performance / poor performance.

Repository: localai

License: apache-2.0

qwen3-22b-a3b-the-harley-quinn

WARNING: MADNESS - UNHINGED and... NSFW. Vivid prose. INTENSE. Visceral Details. Violence. HORROR. GORE. Swearing. UNCENSORED... humor, romance, fun.

Qwen3-22B-A3B-The-Harley-Quinn

This repo contains the full precision source code, in "safe tensors" format to generate GGUFs, GPTQ, EXL2, AWQ, HQQ and other formats. The source code can also be used directly.

ABOUT:

A stranger, yet radically different version of Kalmaze's "Qwen/Qwen3-16B-A3B", which has its experts pruned to 64 (from 128 in the Qwen 3 30B-A3B version). I then added 19 layers (Brainstorm 20x by DavidAU, info at bottom of this page), expanding the model to 22B total parameters.

The goal: slightly alter the model, to address some odd creative thinking and output choices.

Then... Harley Quinn showed up, and then it was a party!

A wild, out of control (sometimes) but never boring party.

Please note that the modifications affect the entire model operation; roughly I adjusted the model to think a little "deeper" and "ponder" a bit - but this is a very rough description.

That being said, reasoning and output generation will be altered regardless of your use case(s).

These modifications push Qwen's model to the absolute limit for creative use cases.

Detail, vividness, and creativity all get a boost.

Prose (all) will also be very different from "default" Qwen3.

Likewise, regen(s) of the same prompt - even at the same settings - will create very different version(s) too.

The Brainstorm 20x has also lightly de-censored the model under some conditions.

However, this model can be prone to bouts of madness.

It will not always behave, and it will sometimes go -wildly- off script.

See 4 examples below.

The model retains the full reasoning and output generation of a Qwen3 MOE, but has not been tested for "non-creative" use cases.

Model is set with Qwen's default config:

40k context

8 of 64 experts activated.

Chatml OR Jinja Template (embedded)
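For readers unfamiliar with ChatML, the sketch below renders a message list into the basic ChatML layout. The helper name is hypothetical, and the embedded Jinja template may add details (thinking tags, tool calls) that this minimal version leaves out.

```python
def render_chatml(messages: list[dict[str, str]],
                  add_generation_prompt: bool = True) -> str:
    """Render chat messages in the basic ChatML layout."""
    parts = []
    for message in messages:
        # Each turn is wrapped in <|im_start|>role ... <|im_end|>.
        parts.append(
            f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        )
    if add_generation_prompt:
        # Leave an open assistant turn for the model to complete.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = render_chatml([
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hello"},
])
print(prompt)
```

In practice the runtime applies the template for you; writing it out by hand is mainly useful for checking that the correct template is in effect.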

Four example generations below.

IMPORTANT:

See usage guide / repo below to get the most out of this model, as settings are very specific.

If not set correctly, this model will not work the way it should.

Critical settings:

Chatml or Jinja Template (embedded, but updated version at repo below)

Rep pen of 1.01 or 1.02; higher (1.04, 1.05) will result in "Harley Mode".

Temp range of 0.6 to 1.2; at higher values you may need to prompt the model to "output" after thinking.

Experts set at 8-10; higher will result in "odder" output, BUT it might be better.

That being said, "Harley Quinn" may make her presence known at any moment.
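To show why such small changes in rep pen and temp matter, here is a sketch of one common formulation of both adjustments applied to raw logits. Whether the runtime you use applies exactly this formulation is an assumption; the function names and toy logits are illustrative.

```python
def apply_repetition_penalty(logits: list[float],
                             seen_token_ids: list[int],
                             penalty: float) -> list[float]:
    """Make already-generated tokens less likely (one common formulation)."""
    out = list(logits)
    for token_id in set(seen_token_ids):
        # Positive logits shrink, negative logits grow more negative.
        if out[token_id] > 0:
            out[token_id] /= penalty
        else:
            out[token_id] *= penalty
    return out


def apply_temperature(logits: list[float], temperature: float) -> list[float]:
    """Divide logits by the temperature; values below 1 sharpen the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    return [x / temperature for x in logits]


toy_logits = [2.0, -1.0, 0.5]            # hypothetical 3-token vocabulary
penalised = apply_repetition_penalty(toy_logits, seen_token_ids=[0, 1, 1], penalty=1.02)
scaled = apply_temperature(penalised, temperature=0.6)
print(penalised, scaled)
```

The penalty is applied to every previously seen token at every step, so even a value like 1.04 compounds across a long generation.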

USAGE GUIDE:

Please refer to this model card for

Specific usage, suggested settings, changing ACTIVE EXPERTS, templates, settings and the like:

How to maximize this model in "uncensored" form, with specific notes on "abliterated" models.

Rep pen / temp settings specific to getting the model to perform strongly.

https://huggingface.co/DavidAU/Qwen3-18B-A3B-Stranger-Thoughts-Abliterated-Uncensored-GGUF

GGUF / QUANTS / SPECIAL SHOUTOUT:

Special thanks to team Mradermacher for making the quants!

https://huggingface.co/mradermacher/Qwen3-22B-A3B-The-Harley-Quinn-GGUF

KNOWN ISSUES:

Model may "mis-capitalize" word(s) - lowercase, where uppercase should be - from time to time.

Model may add extra space from time to time before a word.

Incorrect template and/or settings will result in a drop in performance / poor performance.

Can rant at the end / repeat. Most of the time it will stop on its own.

Looking for the Abliterated / Uncensored version?

https://huggingface.co/DavidAU/Qwen3-23B-A3B-The-Harley-Quinn-PUDDIN-Abliterated-Uncensored

In some cases this "abliterated/uncensored" version may work better than this version.

EXAMPLES

Standard system prompt, rep pen 1.01-1.02, topk 100, topp .95, minp .05, rep pen range 64.

Tested in LMStudio, quant Q4_K_S, GPU (CPU output will differ slightly).

As this is a mid-range quant, expect better results from higher quants and/or with more experts activated.

NOTE: Some formatting lost on copy/paste.
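The sampler settings quoted for the examples (topk 100, topp .95, minp .05) each trim the candidate token list in a different way. The sketch below applies the three filters in one plausible order; the actual order and details depend on the runtime, so treat it as an illustration rather than LMStudio's behaviour. The function name and toy probabilities are assumptions.

```python
def filter_candidates(probs: list[float], top_k: int = 100,
                      top_p: float = 0.95, min_p: float = 0.05) -> dict[int, float]:
    """Apply top-k, then top-p, then min-p, and renormalise what is left."""
    if not probs:
        raise ValueError("probs must not be empty")
    ranked = sorted(enumerate(probs), key=lambda kv: kv[1], reverse=True)
    ranked = ranked[:top_k]                      # top-k: keep the k most likely

    kept, cumulative = [], 0.0
    for index, p in ranked:                      # top-p: smallest prefix with
        kept.append((index, p))                  # cumulative mass >= top_p
        cumulative += p
        if cumulative >= top_p:
            break

    floor = min_p * kept[0][1]                   # min-p: relative to the best token
    kept = [(index, p) for index, p in kept if p >= floor]

    total = sum(p for _, p in kept)
    return {index: p / total for index, p in kept}


toy_probs = [0.6, 0.3, 0.06, 0.03, 0.01]         # hypothetical 5-token vocabulary
print(filter_candidates(toy_probs))
```

With these toy probabilities the top-p step drops the two least likely tokens, while min-p at 0.05 removes nothing further.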

WARNING: NSFW. Vivid prose. INTENSE. Visceral Details. Violence. HORROR. GORE. Swearing. UNCENSORED... humor, romance, fun.

Repository: localai

License: apache-2.0

nousresearch_hermes-4-14b

Hermes 4 14B is a frontier, hybrid-mode reasoning model based on Qwen 3 14B by Nous Research that is aligned to you.

Read the Hermes 4 technical report here: Hermes 4 Technical Report

Chat with Hermes in Nous Chat: https://chat.nousresearch.com

Training highlights include a newly synthesized post-training corpus emphasizing verified reasoning traces, massive improvements in math, code, STEM, logic, creativity, and format-faithful outputs, while preserving general assistant quality and broadly neutral alignment.

What’s new vs Hermes 3

Post-training corpus: Massively increased dataset size from 1M samples and 1.2B tokens to ~5M samples / ~60B tokens blended across reasoning and non-reasoning data.

Hybrid reasoning mode with explicit `<think>…</think>` segments when the model decides to deliberate, and options to make your responses faster when you want.

Reasoning that is top quality, expressive, improves math, code, STEM, logic, and even creative writing and subjective responses.

Schema adherence & structured outputs: trained to produce valid JSON for given schemas and to repair malformed objects.

Much easier to steer and align: extreme improvements on steerability, especially on reduced refusal rates.
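On the client side, hybrid reasoning and structured output usually mean two small steps: separate the deliberation from the final answer, then check that the answer parses as the JSON you asked for. The sketch below assumes the deliberation is wrapped in `<think>` and `</think>` tags; the helper names, the sample response, and the simple key/type check (used here instead of a full JSON Schema validator) are all illustrative assumptions.

```python
import json
import re

THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(text: str) -> tuple[str, str]:
    """Return (reasoning, answer); reasoning is empty if no think block exists."""
    match = THINK_RE.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    answer = (text[:match.start()] + text[match.end():]).strip()
    return reasoning, answer


def parse_json_answer(answer: str, required: dict[str, type]) -> dict:
    """Parse the answer as a JSON object and check required keys and types."""
    obj = json.loads(answer)
    if not isinstance(obj, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in required.items():
        if key not in obj or not isinstance(obj[key], expected_type):
            raise ValueError(f"missing or mistyped field: {key}")
    return obj


raw = '<think>2 + 2 is 4.</think>\n{"answer": 4}'   # hypothetical model output
reasoning, answer = split_reasoning(raw)
result = parse_json_answer(answer, {"answer": int})
print(reasoning, result)
```

If `parse_json_answer` raises, a typical follow-up is to send the malformed object back to the model and ask it to repair the output.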

Repository: localai

License: apache-2.0

gemma-3-27b-it

Google/gemma-3-27b-it is an open-source, state-of-the-art vision-language model built from the same research and technology used to create the Gemini models. It is multimodal, handling text and image input and generating text output, with open weights for both pre-trained variants and instruction-tuned variants. Gemma 3 models have a large, 128K context window, multilingual support in over 140 languages, and are available in more sizes than previous versions. They are well-suited for a variety of text generation and image understanding tasks, including question answering, summarization, and reasoning. Their relatively small size makes it possible to deploy them in environments with limited resources such as laptops, desktops or your own cloud infrastructure, democratizing access to state of the art AI models and helping foster innovation for everyone.

Repository: localai

License: gemma

gemma-3-12b-it

google/gemma-3-12b-it is an open-source, state-of-the-art, lightweight, multimodal model built from the same research and technology used to create the Gemini models. It is capable of handling text and image input and generating text output. It has a large context window of 128K tokens and supports over 140 languages. The 12B variant has been fine-tuned using the instruction-tuning approach. Gemma 3 models are suitable for a variety of text generation and image understanding tasks, including question answering, summarization, and reasoning. Their relatively small size makes them deployable in environments with limited resources such as laptops, desktops, or your own cloud infrastructure.

Repository: localai

License: gemma

gemma-3-4b-it

Gemma is a family of lightweight, state-of-the-art open models from Google, built from the same research and technology used to create the Gemini models. Gemma 3 models are multimodal, handling text and image input and generating text output, with open weights for both pre-trained variants and instruction-tuned variants. Gemma 3 has a large, 128K context window, multilingual support in over 140 languages, and is available in more sizes than previous versions. Gemma 3 models are well-suited for a variety of text generation and image understanding tasks, including question answering, summarization, and reasoning. Their relatively small size makes it possible to deploy them in environments with limited resources such as laptops, desktops or your own cloud infrastructure, democratizing access to state of the art AI models and helping foster innovation for everyone. Gemma-3-4b-it is a 4 billion parameter model.

Repository: localai

License: gemma

gemma-3-1b-it

google/gemma-3-1b-it is a large language model with 1 billion parameters. It is part of the Gemma family of open, state-of-the-art models from Google, built from the same research and technology used to create the Gemini models. Gemma 3 models are multimodal, handling text and image input and generating text output, with open weights for both pre-trained variants and instruction-tuned variants. These models have multilingual support in over 140 languages, and are available in more sizes than previous versions. They are well-suited for a variety of text generation and image understanding tasks, including question answering, summarization, and reasoning. Their relatively small size makes it possible to deploy them in environments with limited resources such as laptops, desktops or your own cloud infrastructure, democratizing access to state of the art AI models and helping foster innovation for everyone.

Repository: localai

License: gemma

gemma-3n-e2b-it

Gemma is a family of lightweight, state-of-the-art open models from Google, built from the same research and technology used to create the Gemini models. Gemma 3n models are designed for efficient execution on low-resource devices. They are capable of multimodal input, handling text, image, video, and audio input, and generating text outputs, with open weights for pre-trained and instruction-tuned variants. These models were trained with data in over 140 spoken languages.

Gemma 3n models use selective parameter activation technology to reduce resource requirements. This technique allows the models to operate at an effective size of 2B and 4B parameters, which is lower than the total number of parameters they contain. For more information on Gemma 3n's efficient parameter management technology, see the Gemma 3n page.

Repository: localai

License: gemma

gemma-3n-e4b-it

Gemma is a family of lightweight, state-of-the-art open models from Google, built from the same research and technology used to create the Gemini models. Gemma 3n models are designed for efficient execution on low-resource devices. They are capable of multimodal input, handling text, image, video, and audio input, and generating text outputs, with open weights for pre-trained and instruction-tuned variants. These models were trained with data in over 140 spoken languages.

Gemma 3n models use selective parameter activation technology to reduce resource requirements. This technique allows the models to operate at an effective size of 2B and 4B parameters, which is lower than the total number of parameters they contain. For more information on Gemma 3n's efficient parameter management technology, see the Gemma 3n page.

Repository: localai

License: gemma

google_medgemma-4b-it

MedGemma is a collection of Gemma 3 variants that are trained for performance on medical text and image comprehension. Developers can use MedGemma to accelerate building healthcare-based AI applications. MedGemma currently comes in three variants: a 4B multimodal version and 27B text-only and multimodal versions.

Both MedGemma multimodal versions utilize a SigLIP image encoder that has been specifically pre-trained on a variety of de-identified medical data, including chest X-rays, dermatology images, ophthalmology images, and histopathology slides. Their LLM components are trained on a diverse set of medical data, including medical text, medical question-answer pairs, FHIR-based electronic health record data (27B multimodal only), radiology images, histopathology patches, ophthalmology images, and dermatology images.

MedGemma 4B is available in both pre-trained (suffix: -pt) and instruction-tuned (suffix -it) versions. The instruction-tuned version is a better starting point for most applications. The pre-trained version is available for those who want to experiment more deeply with the models.

MedGemma 27B multimodal is pre-trained on medical image, medical record, and medical record comprehension tasks. MedGemma 27B text-only has been trained exclusively on medical text, and it has slightly higher performance on some text benchmarks than MedGemma 27B multimodal. Both models have been optimized for inference-time computation on medical reasoning. Users who want a single model for medical text, medical record, and medical image tasks are better served by MedGemma 27B multimodal. Those who only need text use cases may be better served by the text-only variant. Both MedGemma 27B variants are only available in instruction-tuned versions.

MedGemma variants have been evaluated on a range of clinically relevant benchmarks to illustrate their baseline performance. These evaluations are based on both open benchmark datasets and curated datasets. Developers can fine-tune MedGemma variants for improved performance. Consult the Intended Use section below for more details.

MedGemma is optimized for medical applications that involve a text generation component. For medical image-based applications that do not involve text generation, such as data-efficient classification, zero-shot classification, or content-based or semantic image retrieval, the MedSigLIP image encoder is recommended. MedSigLIP is based on the same image encoder that powers MedGemma.

Repository: localai

License: health-ai-developer-foundations
