qwopus3.6-35b-a3b-v1

Repository: localai; License: apache-2.0

qwen3.5-9b-deepseek-v4-flash

Repository: localai; License: apache-2.0

kimi-k2.6

Repository: localai; License: modified-mit

qwopus3.6-27b-v1-preview

Repository: localai; License: apache-2.0

qwen3.6-27b

Repository: localai; License: apache-2.0

qwen3.6-35b-a3b-claude-4.6-opus-reasoning-distilled

Repository: localai; License: apache-2.0

supergemma4-26b-uncensored-v2

Repository: localai; License: gemma

qwen3.6-35b-a3b-apex

Repository: localai; License: apache-2.0

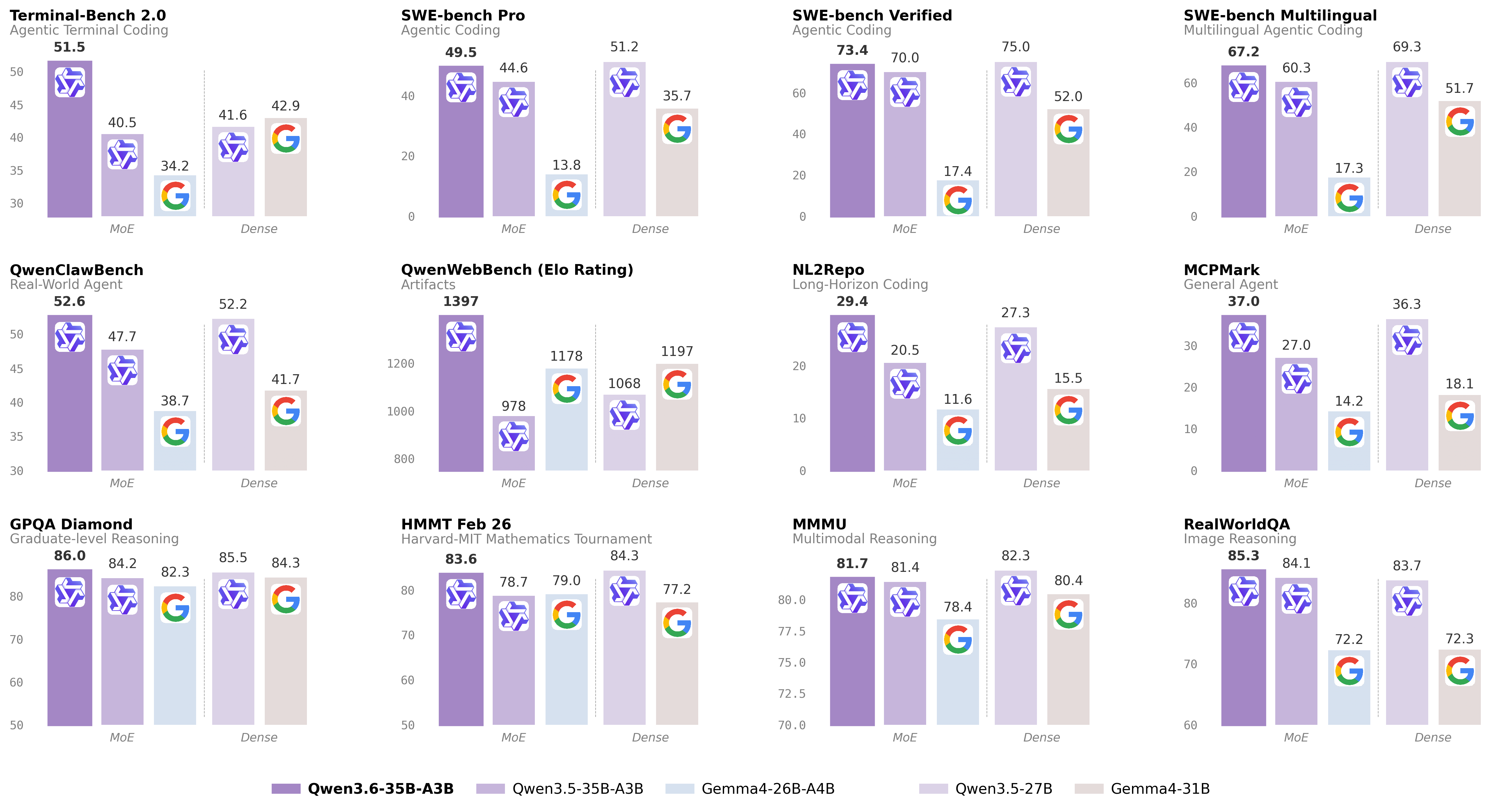

qwen3.6-35b-a3b

Repository: localai; License: apache-2.0

iquest-coder-v1-40b-instruct-i1

Repository: localai; License: iquestcoder

baidu_ernie-4.5-21b-a3b-thinking

Repository: localai; License: apache-2.0

openai_gpt-oss-20b-neo

Repository: localai; License: apache-2.0

openai-gpt-oss-20b-abliterated-uncensored-neo-imatrix

Repository: localai; License: apache-2.0

kwaipilot_kwaicoder-autothink-preview

Repository: localai; License: kwaipilot-license

qwen3-30b-a3b

Repository: localai; License: apache-2.0

qwen3-235b-a22b-instruct-2507

Repository: localai; License: apache-2.0

qwen3-coder-480b-a35b-instruct

Repository: localai; License: apache-2.0

qwen3-32b

Repository: localai; License: apache-2.0

qwen3-14b

Repository: localai; License: apache-2.0

qwen3-8b

Repository: localai; License: apache-2.0